VMware vSphere Hypervisor (ESXi 4.1) Test Lab Configuration Notes

2012-06-20 Updated

2010-12-22 Initial Post

I've never had a chance to work with any version of VMware at work. My last company was so far behind in its IT strategy that even in late 2009 it had absolutely no virtualization strategy at all. I did manage to set up one Hyper-V server for them so that one department could use it for software testing. Where I work now, they do use VMware, but not on any of the messaging servers that I support. So once again, I have no chance to work with VMware.

I recently learned that I really need to know VMware to land another IT job. In the summer/fall of 2010 I had three second-level job interviews. I didn't care for the first one and didn't learn much from it. From the last two, I learned that I really needed to get up to speed on VMware.

Knowing that ESXi would not run on either of my Dell desktops, I bit the bullet and spent around $1,500 US on a new Dell PowerEdge T110 in December 2010. I needed a new server anyway. The Hyper-V desktop I had could only support a maximum of 4 GB RAM, and I needed to start testing Exchange Server 2010, which required more VMs. I couldn't find any decent desktop that supported more than 4 GB RAM, and Dell had a great deal on the T110, so it worked out. The base T110 configuration with an upgrade to the Intel Xeon X3440 and a Broadcom 5709 dual-port NIC cost only $628 US. I chose the X3440 because it has Hyper-Threading, which presents each of its four cores as two logical CPUs, for 8 logical CPUs in total. I then bought more RAM and an SSD/HDDs from CDW and NewEgg.

I've been reading up on ESXi 4.1 since a few weeks before I got the server and have learned quite a bit about it so far. I wrote the three posts listed below to document some of my findings. I keep finding more interesting things as I continue to set up the server, so I decided to create this one post to document everything else I learn from now on.

- Wireless Networking Workaround On VMware ESXi and Hyper-V Hosts Via DD-WRT Client Bridge Mode On A Linksys WRT54G v5

- Install and Run VMWare ESXi 4.1 From USB Thumb/Flash Drive

- Install VMware ESXi 4.1 (VMware vSphere Hypervisor) on Hyper-V VM (I Tried And Didn’t Get It To Work)

Here are some things I've learned in the last few days:

>> It doesn't look like ESXi can access any storage other than VMFS-formatted storage that is direct-attached, network-attached, or SAN-attached. It can access NFS shares, though you'd need to set up an NFS server for that.

>> There's no easy way to give ESXi access to files on any storage device other than the ones the server can see and has created datastores on. I did see some postings with hacks for mounting NTFS- or FAT-formatted USB drives. I only looked into this because I wanted to copy some VHDs from Hyper-V. I found a way to copy the VHDs. I installed vSphere Client on my Hyper-V server, used it to browse the datastore on ESXi, and copied the files over. That worked fine, although it was slow because the copy had to go over the network. I learned about that from this VMware KB.

>> I didn't see any way in Windows 7 to access a VMFS volume (I have Windows 7 installed on the same server so I can manually dual boot between it and ESXi). So it looks like there's no easy way for Windows and ESXi to transfer files other than through the network with something like NFS or via vSphere Client.

>> VMware vSphere PowerCLI is a PowerShell-based CLI to manage vSphere. There's also another CLI, the vSphere Command-Line Interface, that seems to do similar things. However, it looks like PowerCLI is newer and probably is, or will become, more feature-rich since it's based on PowerShell.

>> StarWind Software has a free converter, StarWind V2V Converter, which basically allows you to convert from one virtual hard disk format/image to another. I tried it on all five Hyper-V VHDs that I had and converted them to VMDKs. The conversions completed with no errors, but so far two of the VMDKs (Windows 7 x86 and Windows Server 2003 R2 x86) don't work in ESXi. I'm not even going to bother testing the remaining three. I did try editing the VMs to use different virtual hard disk and network controllers, which didn't help. Basically, both VMs blue-screened on startup. What a dummy I am for wasting time converting all those VMs; I should have confirmed that the first one worked before proceeding to do the rest.

>> Next, I decided to give VMware vCenter Converter Standalone a try, and so far it's been a pain. I made two educated guesses about this software:

1) It would be more efficient to install the converter directly on the Hyper-V server.

2) I would be able to browse to a VHD file directly on the server and have the program convert it.

Well, it turns out that I have to actually connect to a Hyper-V server to find the VHDs to convert. So I had the converter connect to the same server it was installed on. I got prompted to install an agent, and after that I got an error: "Another version of the product is already installed." Duh! OK, so I installed the converter on my Windows 7 laptop, and it's not able to connect to the Hyper-V server. I've disabled the firewall on the server, which didn't help. I'll have to try a few more things.

>> There's a VMware Converter boot CD for physical to virtual conversions. It looks like it requires a license.

2010-12-27

>> The VMware converter ended up working pretty well. I had disabled the firewall for the wrong network profile on the Hyper-V server, which is why the converter couldn't connect to it. Another option is to disable the Windows Firewall service. If you do, I recommend setting the service's startup type to Disabled and then rebooting. When I tried just stopping the service, it hung the server's network connection and I couldn't RDP into or ping the server.

>> A few things about the VMware converter:

- It finds all the VMs on the Hyper-V server and lists them for you to choose from. The one downside is that you still cannot select a VHD directly; only actual VMs can be selected. I had a VHD that didn't have a VM configured for it, so I had to set up a VM for it, which only takes a minute or two.

- You can rename the VM before conversion. The converted VMDK file will be named the same as the VM.

- You can select the destination VMware server and will need to authenticate to that server. After that you can select the datastore and VM version format. One thing that sucks is that you cannot select a folder, only the root of the datastore. The conversion will create a folder for each VM though, but it would be nice if you could select a parent folder to put all the converted VMs into.

- You can edit some settings before the conversion starts. One interesting setting is the startup mode of the VM's services (the converter lists all Windows services on a Windows VM). You can also choose to install VMware Tools.

>> VMware Tools is software that gets installed on the guest. It allows the VM host and guests to interact with each other. It's basically the same thing as Hyper-V Guest Components/Integration Services.

>> For a 20 GB Windows 7 Ultimate VHD, with both servers on wired 100 Mbps Ethernet to the same switch (Actiontec router), the conversion took 24 minutes. For a 20 GB Windows Server 2008 R2 Enterprise x64 VHD, it took 23 minutes. For the Windows 7 and Server 2008 R2 conversions, this message appeared in the log: Warning: Cannot find a system volume for reconfiguration. Both VMs worked fine afterwards, so in my case the warning was harmless. I did not get that warning for XP or Server 2003.
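A quick back-of-the-envelope check puts those times in perspective. The Python sketch below takes the 20 GB, 24/23 minute, and 100 Mbps figures from above and assumes decimal units (1 GB = 10^9 bytes). A full 20 GB copy at 100 Mbps would need about 27 minutes even with zero overhead. My guess is therefore that the converter did not push all 20 GB over the wire, most likely just the used blocks of dynamically expanding VHDs. That conclusion is my own inference; nothing in the converter log confirmed it.

```python
# Back-of-the-envelope throughput check for the Hyper-V -> ESXi conversions.
# Assumes decimal units: 1 GB = 10**9 bytes, 1 Mbps = 10**6 bits/s.

GB = 10**9
MBPS = 10**6


def min_transfer_minutes(size_gb: float, link_mbps: float) -> float:
    """Minimum minutes to push size_gb across a link_mbps link with zero overhead."""
    bits = size_gb * GB * 8
    return bits / (link_mbps * MBPS) / 60


def effective_mbps(size_gb: float, minutes: float) -> float:
    """Average rate in Mbps if size_gb had really crossed the wire in `minutes`."""
    bits = size_gb * GB * 8
    return bits / (minutes * 60) / MBPS


if __name__ == "__main__":
    floor = min_transfer_minutes(20, 100)
    print(f"Full 20 GB at 100 Mbps needs at least {floor:.1f} min")
    for label, minutes in [("Windows 7 Ultimate", 24), ("Server 2008 R2", 23)]:
        rate = effective_mbps(20, minutes)
        print(f"{label}: {minutes} min -> {rate:.0f} Mbps if all 20 GB were copied")
```

Both implied rates (about 111 and 116 Mbps) are above what a 100 Mbps link can carry, which is why I don't think the full disk size was transferred.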

>> With the free version of vSphere Client/ESXi, there is no option to clone a VM. OK, that's fine, but the help system has a section for "Clone a Virtual Machine to a Template." This was annoying because I thought I was doing something wrong when I tried to clone my converted VMs. Since that section is in the help system, why would I think it wouldn't work? From what I could find, that feature is only available when you manage hosts through vCenter Server (which you need to pay for). It is not available when vSphere Client is connected directly to a host.

>> If you want to "clone" a VM, copy the VM's VMDK and VMX files to a new folder, then right-click on the VMX file (this is the text file that stores the VM's configuration) --> Add to inventory. I found the instructions for doing that here. Note that you cannot rename a VMDK file, so make sure to give the VMDK a generic name to begin with, such as zz_Template_XP.VMDK. Fortunately, I had my VMs named like that on Hyper-V, so when I "cloned" the converted VMs, that name carried over to the VM and VMDK file names.
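The manual "clone" boils down to picking the right files out of the source VM's folder. Here is a minimal Python sketch of that selection step. It assumes you have a plain list of file names from the VM's folder; the example names are hypothetical. It keeps the .vmx and every .vmdk and skips runtime files such as the .vswp swap file and the logs, which ESXi creates again. From the ESXi shell, a virtual disk shows up as a small .vmdk descriptor plus a large -flat.vmdk data file, so the filter keeps both.

```python
# Sketch: choose which files to copy when "cloning" a VM by hand on free ESXi.
# Follows the manual method above: copy the .vmx and the virtual disk files,
# then register the copied .vmx via "Add to Inventory".
# The file names below are hypothetical examples.

from typing import Iterable, List

# Extensions worth copying: the VM configuration and its virtual disk(s).
# ".vmdk" also matches the "-flat.vmdk" data file that backs each descriptor.
COPY_SUFFIXES = (".vmx", ".vmdk")


def files_to_copy(folder_listing: Iterable[str]) -> List[str]:
    """Return the files needed for a manual clone, in sorted order.

    Swap files (.vswp), logs (.log) and other runtime files are skipped
    because ESXi creates them again when the copied VM is powered on.
    """
    return sorted(
        name for name in folder_listing
        if name.lower().endswith(COPY_SUFFIXES)
    )


if __name__ == "__main__":
    listing = [
        "zz_Template_XP.vmx",
        "zz_Template_XP.vmdk",
        "zz_Template_XP-flat.vmdk",
        "zz_Template_XP-1a2b3c4d.vswp",
        "vmware.log",
        "zz_Template_XP.nvram",
    ]
    print(files_to_copy(listing))
```

The comparison is case-insensitive because names like zz_Template_XP.VMDK can show up in upper case.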

>> A VM's swap file gets created even if the host's memory is not overcommitted. As soon as the VM is started, a swap file for that VM is created. The swap file is named in the format <VMDK file name without the .vmdk part>-<8-character hex number>.vswp and is the same size as the VM's RAM setting (2 GB RAM = 2 GB .vswp file). You can set a default swap file location for all VMs and also override that setting for each VM. I chose to have all the swap files in one default location. Hyper-V works pretty much the same way.
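The naming and sizing rule can be written as a small Python check. This sketch rests on two assumptions. The first is that the name pattern is the one I observed above: a base name, a hyphen, 8 hex digits, and .vswp. The second is that the size is the configured RAM minus any memory reservation. None of my VMs has a reservation, which is why each .vswp matched the VM's RAM exactly. The reservation term comes from VMware's documentation on swap file sizing, not from my own testing.

```python
# Sketch: parse a VM swap file name and compute its expected size.
# Pattern assumed from observation: <base name>-<8 hex digits>.vswp
# Size rule assumed: configured RAM minus memory reservation.

import re
from typing import Optional, Tuple

VSWP_PATTERN = re.compile(r"^(?P<base>.+)-(?P<tag>[0-9a-fA-F]{8})\.vswp$")


def parse_vswp(name: str) -> Optional[Tuple[str, str]]:
    """Split a .vswp file name into (base name, 8-digit hex tag), or None."""
    m = VSWP_PATTERN.match(name)
    if m is None:
        return None
    return m.group("base"), m.group("tag")


def expected_vswp_mb(configured_ram_mb: int, reservation_mb: int = 0) -> int:
    """Expected swap file size in MB; with no reservation it equals the RAM setting."""
    if not 0 <= reservation_mb <= configured_ram_mb:
        raise ValueError("reservation must be between 0 and the configured RAM")
    return configured_ram_mb - reservation_mb


if __name__ == "__main__":
    print(parse_vswp("zz_Template_XP-1a2b3c4d.vswp"))  # hypothetical file name
    print(expected_vswp_mb(2048))        # 2 GB RAM, no reservation
    print(expected_vswp_mb(2048, 512))   # same VM with a 512 MB reservation
```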

>> The automatic VM startup/shutdown settings are not within the VM's settings, but centralized within the host's Virtual Machine Startup and Shutdown settings. See this VMware KB for more info.

>> I was trying to set up an automatic start order and it was not very intuitive. I'm not the only one who was stumped, as this post shows. Per the help system: Use Move Up and Move Down to specify the order in which the virtual machines start when the system starts. OK, but who would think that moving it up or down could also move it to a different section? Duh!

>> The help system doesn't mention this, but the Virtual Machine Startup and Shutdown settings dialog states "During shutdown, they will be stopped in the opposite order." This is good to know when setting up delays for cluster node startup and shutdown.

>> One thing I don't like about VMware's startup/shutdown settings is the startup delay. In Hyper-V, a VM's startup delay is relative to the startup of the VM host, not to the preceding VM guest. Also, the startup/shutdown settings in Hyper-V can be configured at the individual VM guest level.
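The difference between the two startup models is easiest to see with numbers. The Python sketch below is a simplified model, not VMware's actual scheduler. It assumes each VM's startup delay is waited out in full before the next VM in the list starts, and it ignores the option to continue as soon as VMware Tools starts. It derives the shutdown order by reversing the startup order, as the settings dialog says. The VM names and delays are hypothetical.

```python
# Simplified model of ESXi 4.1 automatic startup/shutdown ordering.
# Assumption: each VM's startup delay is waited out in full before the next
# VM in the list is started, so delays accumulate down the list.

from typing import List, Tuple


def startup_schedule(order: List[Tuple[str, int]]) -> List[Tuple[str, int]]:
    """Return (vm_name, start_offset_seconds) relative to host boot.

    `order` is the startup list as (vm_name, startup_delay_seconds) pairs.
    The first VM is modeled as starting at offset 0.
    """
    schedule = []
    offset = 0
    for name, delay in order:
        schedule.append((name, offset))
        offset += delay  # the next VM waits for this VM's delay
    return schedule


def shutdown_order(order: List[Tuple[str, int]]) -> List[str]:
    """VMs are stopped in the opposite order of startup."""
    return [name for name, _ in reversed(order)]


if __name__ == "__main__":
    # Hypothetical lab: domain controller first, then the two cluster nodes.
    lab = [("DC01", 120), ("NODE1", 90), ("NODE2", 60)]
    print(startup_schedule(lab))  # [('DC01', 0), ('NODE1', 120), ('NODE2', 210)]
    print(shutdown_order(lab))    # ['NODE2', 'NODE1', 'DC01']
```

In Hyper-V, each VM's delay is counted from host startup instead. A 210-second start for NODE2 would simply be NODE2's own delay there, with no dependence on DC01 or NODE1.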

>> There's a "free" backup utility called ghettoVCB that "allows users to perform backups of virtual machines residing on ESX(i) 3.5/4.x servers using methodology similar to VMware's VCB tool." I thought I could mess with this, but upon further reading I found that it requires features only available in paid versions of ESXi.

>> VMware has something called vSphere Management Assistant (vMA), which is "a virtual machine that includes prepackaged software such as a Linux distribution, the vSphere command‐line interface, and the vSphere SDK for Perl. Basically it is the missing service console for ESXi." I haven't tried this yet. I wouldn't be surprised if this is another feature that doesn't work with the free version of ESXi. Also see http://www.vmware.com/support/developer/vima/.

>> Before installing vSphere PowerCLI, I wanted to make sure I had the required version of Microsoft .NET Framework (.NET 2.0 Service Pack 1). Geez, how can something so simple take so long to figure out??? After reading several articles, it turns out that if you have .NET 3.5 SP1 (this is the version that ships with Windows 7 and Server 2008 R2), it includes .NET Framework 2.0 SP2. See http://en.wikipedia.org/wiki/.NET_Framework_version_history#.NET_Framework_3.5. Also see http://alt.pluralsight.com/wiki/default.aspx/Keith/DotnetVersionWiki.html.

>> I was able to use PowerCLI to connect to my ESXi server and list the VMs on it. That was a good start. I thought I could use it to create a PowerShell script (.PS1 file) to reboot or shut down the server, so I wouldn't have to log in with the vSphere Client to do that. After over an hour of debugging my PowerShell script, the last error I got mentioned "RestrictedVersion." Guess what? This is something else that can't be done with the free version. Geez, not again!!! So basically you can use PowerCLI to connect to the free ESXi and run "read" commands only. Any "write" command, meaning any command that changes the configuration or state of the server or VMs, cannot be run against the free version of ESXi. See http://communities.vmware.com/message/1411805.

>> Regardless of the limitations of PowerCLI, I did welcome the knowledge gained from learning to write a PS script. When writing a PS script, cmdlets from snap-ins (which are basically add-ons to the set of core PS cmdlets) must be loaded with a line in the script that adds the snap-in, e.g., Add-PSSnapin VMware.VimAutomation.Core. VMware.VimAutomation.Core is the snap-in that contains the VMware-specific cmdlets included with vSphere PowerCLI. If you don't add the snap-in, you'll get an error like this when you try to use a VMware cmdlet in a script: The term 'Connect-VIServer' is not recognized as the name of a cmdlet, function, script file, or operable program. Check the spelling of the name, or if a path was included, verify that the path is correct and try again. If you open PS via the VMware vSphere PowerCLI shortcut, the VMware snap-in is loaded automatically. See p. 8 of the vSphere PowerCLI Administration Guide and http://technet.microsoft.com/en-us/library/dd347601.aspx.

>> To execute a PS1, right-click on it --> Run with PowerShell.

>> The Integrated Scripting Environment (ISE), which is new in PS 2.0, is slower to open than Notepad++.

>> Openfiler Setup - I've been trying to set up Openfiler so that I can eventually set up shared iSCSI LUNs for a Windows Server 2003/Exchange Server 2003 Microsoft Cluster Server. They have a preconfigured VM, which I downloaded, but I could not fully extract the files (I used ExtractNow). Other users have had the same issue per https://forums.openfiler.com/viewtopic.php?pid=8939. I decided not to waste any more time on that and downloaded the ISO image (x86/64) to install from scratch. I had read the installation instructions before, so I knew it wouldn't take long. I set up a typical Red Hat Enterprise Linux 5 (64-bit) VM per the instructions at http://www.vmwarehub.com/Openfiler%20Configuration.pdf. Even finding out which VM guest OS type to select took a few searches. The only difference was that I added a second NIC (for the dedicated iSCSI subnet/vSwitch). After the install completed and the server rebooted, the production and iSCSI NICs got switched around in Openfiler. Network adapter 1 (in the VM settings) showed up as Eth1 (in Openfiler's Linux), and network adapter 2 showed up as Eth0. So to troubleshoot, I tried the following:

1. Shut down the VM and then switched around the networks assigned to the NICs in the VM settings. After the reboot, Openfiler used the iSCSI IP address for the administration interface. I swapped the IP addresses between Eth0 and Eth1 from the console, using Linux commands from http://www.cyberciti.biz/faq/linux-change-ip-address. After another reboot, Openfiler still used the iSCSI IP address for the administration interface!

2. Removed all NICs, shut down the VM, and then restarted.

3. Shut down the VM, added one NIC, and restarted.

4. Configured the one NIC for the production subnet from the Linux console. After that, Openfiler worked fine until I added the second NIC, which caused the same initial issue with the NICs getting switched around.

The fun never ends. I've reinstalled Openfiler TWICE now and still cannot get the NICs set up correctly. So basically I cannot get Openfiler to work correctly with two NICs. This has been very frustrating, and I might eventually be able to resolve the issue, but I don't want to waste any more time. As popular as Openfiler is, I just can't seem to find any really good documentation on it. I did manage to get a copy of the Openfiler 2.3 Administrator Guide but the darn PDF was not OCR'd and is not searchable. If you take a look at the table of contents, you'll see that it's not searchable either. I might be able to find a resolution to the NIC issue in the guide, but since it's not searchable, doing so will take longer.

At this point, I'm going to look into FreeNAS. From what I've read, FreeNAS isn't as feature-rich as Openfiler. I don't really care, because I just need to create some basic shared iSCSI LUNs, which FreeNAS looks like it can do, and I don't need any other features. Also, there appear to be some decent instructions at Simon Day's blog for setting up FreeNAS within a VM. We'll see how this goes . . .

>> Default Shutdown Delay: I needed to set a shutdown delay (I made it 300 seconds/5 minutes) because I noticed in the system logs of the VMs that they had dirty shutdowns whenever I rebooted or shutdown the host. I’m really surprised that I had to set this even though I have VMware Tools on all my Windows guests. I never had this issue with Hyper-V guests because the host made sure the guests shut down completely before the host shut down or rebooted (at least for the guests with the Hyper-V Guest Components/Integration Services installed).

Per help system: This shutdown delay applies only if the virtual machine has not already shut down before the delay period elapses. If the virtual machine shuts down before that delay time is reached, the next virtual machine starts shutting down. So this means that all my VMs will have at least 5 minutes to shut down, but if they shut down before then, the system moves on to shut down the next VM.
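The help text describes a sequential wait with a timeout, which the Python sketch below models. The per-VM shutdown times are made-up numbers. The model assumes the host simply moves on to the next VM when the delay expires, as the help text says. It also shows the worst case: with a 300-second delay, the host can spend up to 5 minutes per VM before it shuts itself down.

```python
# Model of the ESXi shutdown delay described in the help text:
# VMs shut down one at a time; the host moves to the next VM as soon as the
# current one is off, or when the shutdown delay expires, whichever is first.

from typing import List, Tuple


def shutdown_timeline(
    vms: List[Tuple[str, int]], delay_s: int = 300
) -> Tuple[List[Tuple[str, int, bool]], int]:
    """Simulate sequential guest shutdowns.

    vms: (vm_name, seconds the guest actually needs to shut down), in shutdown order.
    Returns ([(vm_name, seconds_waited, finished_in_time)], total_seconds).
    """
    events = []
    total = 0
    for name, needed in vms:
        waited = min(needed, delay_s)  # never wait longer than the delay
        events.append((name, waited, needed <= delay_s))
        total += waited
    return events, total


if __name__ == "__main__":
    # Hypothetical guest shutdown times in seconds.
    guests = [("NODE2", 45), ("NODE1", 60), ("DC01", 400)]
    events, total = shutdown_timeline(guests)
    for name, waited, in_time in events:
        print(f"{name}: waited {waited}s, finished in time: {in_time}")
    print(f"total before host shutdown: {total}s")
```

A guest that needs longer than the delay (DC01 here) is the one at risk of a dirty shutdown.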

2010-12-30 - FreeNAS Setup

>> I was looking up more stuff on FreeNAS and found this iSCSI target comparison matrix. One interesting thing that I saw on there was Microsoft Windows Storage Server. I just got a Microsoft TechNet Professional subscription which includes licenses for Windows Storage Server 2008 R2 iSCSI. So at least I have another option besides FreeNAS, although I’d rather use FreeNAS since it has a smaller footprint and all I need is iSCSI.

>> There are some confusing things with the FreeNAS download page such as the file name for the 64-bit download, FreeNAS-amd64-LiveCD-0.7.2.5543.iso. Even though it has “amd64” in the name, it can be used with Intel 64-bit CPUs. I had to read a few articles to confirm this. I don’t know why they don’t use “x64” in the name. Another confusing thing was that they also had an IMG file, FreeNAS-amd64-embedded-0.7.2.5543.img. Per FreeNAS doc, the IMG file is for an upgrade. Some of the how-to articles I’ve read don’t clarify anything I’ve just mentioned. I spent more time figuring all that out than it took to actually download and install FreeNAS.

>> My VMs are still shutting down dirty. This might be an issue with VMware Tools being installed on my template VMs. I had an issue with that on my Windows 7 template and had to remove/reinstall VMware Tools. My other VMs seem to work fine with the VMware Tools since I can reboot or shut them down manually. I’ll just remove VMware Tools from the templates and also remove/reinstall VMware Tools on the cloned VMs. They also had Hyper-V Guest Components/Integration Services so I’ll remove that as well.

>> Oh man, what a waste of time Openfiler was. I just read on Sysprobs that Openfiler doesn’t support SCSI-3 persistent reservations on its disks, which Windows Server clusters require. I should have looked into that before I wasted all those hours trying to set up Openfiler. I just checked this Openfiler forum post, and it confirms that Openfiler 2.3 (the current version) doesn’t support that feature. And it looks like 2.3 has been out for over two years, which is a long time without an update. Openfiler uses rPath Linux, which I’d never heard of; FreeNAS uses FreeBSD, which at least is a guest OS option in ESXi.

>> I decided not to use the instructions at Simon Day's blog and instead used the instructions at Sysprobs (Simon Day's blog does go one step further and shows how to connect to FreeNAS with the Microsoft iSCSI Initiator). I followed the Sysprobs instructions but added a second NIC to segregate regular LAN traffic from iSCSI. Setting up the second NIC in FreeNAS was a bit confusing. I tried to do it from the console and didn’t understand what “OPT1” was but I eventually figured out that “OPT” means optional (as in an optional interface—an interface other than the LAN interface that’s used for administration) and ‘1’ means the first optional interface. It’s easier to configure the additional NICs from within the Web GUI. You can also rename OPT1 to something else, such as iSCSINet, which makes more sense. Make sure to activate (enable) the interface or you won’t be able to change its settings. See the FreeNAS doc.

It’s annoying that even changing the IP address on the optional NIC requires a reboot, but adding a new disk to the FreeNAS VM doesn’t require a reboot for it to see the disk.

>> Starting at step 18 in the Sysprobs instructions, something didn’t look right to me. He used two disks to create two extents/targets, which isn’t necessary. I don’t think most people would do that, because it adds too much overhead. Imagine if you had 50 iSCSI LUNs to create; you wouldn’t want to add 50 disks. I did take an EMC Celerra course, so I know that iSCSI storage is abstracted so that one disk (or RAID volume) can be sliced up into many LUNs. I decided to read the instructions in the FreeNAS doc instead, but there were so many steps because the example uses 4 disks. So I went back to Sysprobs and followed most of the steps, but modified them to use only one disk with multiple extents/targets. I also removed my production subnet from Services|iSCSI Target|Portal Group|Add and left my iSCSI subnet in there. The portal setting basically tells FreeNAS which interfaces it can use for iSCSI traffic. I also removed the production subnet from Initiators --> Authorised network.
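The Portal Group and Authorised network settings work essentially as subnet filters. The Python sketch below uses the standard ipaddress module to show the effect of keeping only the iSCSI subnet. With that setting, an initiator on the iSCSI subnet is allowed and one on the production LAN is not. The subnets and addresses are hypothetical lab values. This illustrates the idea only; it is not how FreeNAS implements the check internally.

```python
# Illustration of an iSCSI "authorised network" filter.
# Subnets and addresses are hypothetical lab values.

import ipaddress
from typing import Iterable

PRODUCTION_NET = "192.168.1.0/24"  # hypothetical production LAN
ISCSI_NET = "10.10.10.0/24"        # hypothetical dedicated iSCSI subnet


def initiator_allowed(initiator_ip: str, authorised_networks: Iterable[str]) -> bool:
    """True if the initiator's address falls inside any authorised network."""
    addr = ipaddress.ip_address(initiator_ip)
    return any(addr in ipaddress.ip_network(net) for net in authorised_networks)


if __name__ == "__main__":
    before = [PRODUCTION_NET, ISCSI_NET]  # both subnets authorised
    after = [ISCSI_NET]                   # production subnet removed
    for ip in ("10.10.10.21", "192.168.1.21"):
        print(ip, "before:", initiator_allowed(ip, before),
              "after:", initiator_allowed(ip, after))
```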

It looks like there’s a one-to-one relationship between an iSCSI target and an extent. Basically the target is the interface that the clients connect to and the extent is the actual storage location. Make sure to give your targets descriptive names since they’re what the client will see. Also, when naming objects in FreeNAS, put something at the end of the name that describes what that object is. For example, for an extent, put “ext” at the end of the name. This will help you better understand how all the objects are interconnected when you see them in the GUI. You’ll understand that you need to create/configure the objects in this order:

Disk (formatted with ZFS) --> ZFS virtual device --> ZFS virtual device management --> iSCSI portals (IP addresses/ports that can be used by initiators to access targets) --> iSCSI initiators (allowed hosts/networks) --> extent --> iSCSI target.

I'm not clear on why a ZFS virtual device management object is necessary when a ZFS virtual device has already been created. I guess you can add multiple virtual devices to one virtual device management object, which further abstracts the underlying storage.
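Each object in that chain builds on the ones created before it, so tearing the setup down should happen in the reverse order: target first, disk last. I learned that the hard way, as described further down. The Python sketch below encodes the creation order from the list above and derives a matching deletion order. It is conservative, because it treats the list as a strict chain even though portals and initiators don't really depend on the disk. The short object labels are my own shorthand, not FreeNAS identifiers.

```python
# Sketch: creation order of the FreeNAS objects listed above and the
# matching safe deletion order (reverse), so nothing is deleted while an
# object created after it still exists. Labels are shorthand, not FreeNAS IDs.

from typing import List

CREATION_ORDER: List[str] = [
    "disk (ZFS formatted)",
    "ZFS virtual device",
    "ZFS virtual device management",
    "iSCSI portal",
    "iSCSI initiator",
    "extent",
    "iSCSI target",
]


def deletion_order(creation: List[str]) -> List[str]:
    """Delete in the reverse of creation order: target first, disk last."""
    return list(reversed(creation))


def is_safe_to_delete(obj: str, remaining: List[str], creation: List[str]) -> bool:
    """Conservative check: safe only if nothing created after `obj` still exists."""
    idx = creation.index(obj)
    return not any(creation.index(o) > idx for o in remaining if o != obj)


if __name__ == "__main__":
    print(" -> ".join(deletion_order(CREATION_ORDER)))
    # Deleting the disk while everything else still exists is not safe:
    print(is_safe_to_delete("disk (ZFS formatted)", CREATION_ORDER, CREATION_ORDER))
```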

>> I know that FreeNAS is free and open source, but the documentation is lacking. For example, the FreeNAS doc I mentioned earlier was taken from a forum member who, by his own admission, is "by no means a FreeNAS expert." After I got everything set up and was more comfortable with FreeNAS, I did go back and read through that FreeNAS doc and it is decent, albeit very lengthy. But there are many areas of the user guide that don't even exist and have this message: You've followed a link to a topic that doesn't exist yet. If permissions allow, you may create it by using the Create this page button. But on the positive side, FreeNAS' documentation is better than Openfiler's, so I'm glad I ended up using FreeNAS.

>> I had to download and install Microsoft iSCSI Software Initiator Version 2.08 on the servers that I plan to use as my Windows Server 2003/Exchange Server 2003 cluster nodes. Note that the iSCSI initiator is already included with Vista and later. I was able to get both servers connected to the FreeNAS iSCSI target. After I get my AD domain set up, I’ll configure those servers as an Exchange Server 2003 cluster, so hopefully everything works well with that.

>> FreeNAS allows you to delete a ZFS disk before deleting the virtual devices, extents, and targets that depend on that disk. There should be a safety check to prevent this, or at least a warning message. For practice, I deleted the ZFS disk I had created, and then deleted the targets, extents, and virtual devices. When I went to set up everything again, I kept getting errors when adding a new object in ZFS virtual device management. The System information page in the Web GUI still showed my old disk settings, so I knew something wasn’t right. I guess this was because I didn't delete the virtual device management object before deleting the virtual device. Again, there should have been some type of warning. I ended up deleting the data disk from the FreeNAS VM and creating a new one, and was then able to get everything set up again with no issues.

I noticed that the “disk” that shows up in the System information page is the name of the object created in Disks|ZFS|Pools|Management. That same object name is used as the /mnt path when creating an extent. Because of the way I named the objects, it was easy for me to see their relationship. This is how I named my objects:

Data-Disk-01 --> ZFS-VD-01 --> ZFS-VD-01-Mgmt --> chh-excevs-01-Q-Quorum-Ext --> chh-excevs-01-Q-Quorum-Tgt.

I used chh-excevs-01 because that will be the name of my “Exchange Virtual Server” (that’s what an Exchange Server 2003 cluster is called).
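If you end up creating more than a couple of LUNs, it's easy to generate names that follow this convention consistently. The Python helper below is hypothetical; it is my own illustration, not something FreeNAS provides. It reproduces the names above from the disk number, the server name, the drive letter, and the disk label.

```python
# Hypothetical helper that reproduces the FreeNAS object naming convention above.

from typing import List


def object_names(disk_no: int, server: str, letter: str, label: str) -> List[str]:
    """Names for disk -> ZFS VD -> VD management -> extent -> target."""
    nn = f"{disk_no:02d}"                        # zero-padded disk number
    lun = f"{server}-{letter.upper()}-{label}"   # e.g. chh-excevs-01-Q-Quorum
    return [
        f"Data-Disk-{nn}",
        f"ZFS-VD-{nn}",
        f"ZFS-VD-{nn}-Mgmt",
        f"{lun}-Ext",
        f"{lun}-Tgt",
    ]


if __name__ == "__main__":
    print(" --> ".join(object_names(1, "chh-excevs-01", "q", "Quorum")))
```

Because all my LUNs live on one data disk, the F-EDB and G-Log LUNs would reuse disk number 1 and differ only in the extent and target names.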

>> FreeNAS can encrypt disks. I’m not sure how secure it is, but it does have several modern encryption algorithms. Also ZFS seems to be a really robust file system. Formatting a disk as “ZFS storage pool device” gives you a lot of options.

2010-12-31

I got a call today from someone at VMware. I had left a message at the number for one of the US offices, inquiring about a package for IT professionals to try VMware products without expiration dates, something like Microsoft TechNet subscriptions. The person who called me back stated that they don't have anything like that. I mentioned that I had read in the forums that there used to be a package, and he confirmed that there was but it's no longer available. So I asked him what would be the cheapest package I could get with ESXi with VMotion, and he said it'd be a few thousand dollars.

Without investing thousands just in the software, I can't really learn VMware well. The evaluation time of 60 days is just not long enough. Yeah, I could learn some stuff in 60 days, but what if I want to go back and reference something after that time? I'd need to reconfigure my vSphere environment every 60 days then. Total BS. Hyper-V is looking real good now. I do have the TechNet subscription, so I'm licensed to use all the Hyper-V features with no expiration.

2011-01-03

>> I had been getting this Event ID 7026 error on my Windows Server 2003 R2 SP2 VMs: The following boot-start or system-start driver(s) failed to load: vmbus. I didn’t find an exact fix for that error, but I found a similar one that worked. I followed the instructions at this VMware KB, but uninstalled the device named Virtual Machine Bus instead. I only needed to restart the VM afterwards; I did not need to reinstall VMware Tools.

>> I finally decided to look into why copy/paste between vSphere Client and the VMs doesn’t work. Apparently several VMware admins in this forum post didn’t know that 4.1 disabled that feature. The post referenced this VMware KB, which explains that the feature was “disabled for security reasons” and has instructions for enabling it.

I followed the instructions in the KB, and copy/paste through the vSphere Client console now works for all my active VMs. I also made the changes in my template VMs, so VMs copied from them should work too. Fortunately, I didn’t have too many VMs set up yet. I should have looked into this issue sooner, but it wasn’t a big deal, so I waited until now. If I had 20 VMs set up, it wouldn’t be fun to make this change manually on each VM. And since I have the free ESXi version, I don’t think any automated method would work, so I’d have to do it manually.
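For the record, the KB change amounts to two lines in each VM's .vmx file, so an offline edit might still be scriptable on the free version. You would download the files through the datastore browser, edit them, and upload them again. Here's a rough Python sketch of that idea, untested against ESXi itself. It assumes the VMs are powered off, and that you may need to remove each VM from inventory and add it back so ESXi rereads the file. It sets isolation.tools.copy.disable and isolation.tools.paste.disable to "FALSE", the two settings from the KB. The script name in the usage comment is hypothetical. Keep the .bak copies it makes until you've confirmed each VM still boots.

```python
# Sketch: enable clipboard copy/paste by editing a .vmx file's text.
# Assumes the VM is powered off and the .vmx was downloaded from the datastore;
# upload the edited file back afterwards. A .bak copy of the original is kept.

import re
import shutil
import sys
from typing import Dict

SETTINGS: Dict[str, str] = {
    "isolation.tools.copy.disable": "FALSE",
    "isolation.tools.paste.disable": "FALSE",
}


def set_vmx_options(vmx_text: str, settings: Dict[str, str]) -> str:
    """Return vmx_text with each key set to value, replacing an existing line if present."""
    lines = vmx_text.splitlines()
    for key, value in settings.items():
        new_line = f'{key} = "{value}"'
        pattern = re.compile(r"^\s*" + re.escape(key) + r"\s*=", re.IGNORECASE)
        for i, line in enumerate(lines):
            if pattern.match(line):
                lines[i] = new_line  # overwrite the existing setting
                break
        else:
            lines.append(new_line)  # key not present yet: add it at the end
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Usage: python enable_clipboard.py VM1.vmx VM2.vmx  (script name is hypothetical)
    for path in sys.argv[1:]:
        shutil.copy2(path, path + ".bak")  # keep the original
        with open(path, encoding="utf-8") as f:
            original = f.read()
        with open(path, "w", encoding="utf-8", newline="\n") as f:
            f.write(set_vmx_options(original, SETTINGS))
        print(f"updated {path} (backup: {path}.bak)")
```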

>> The Flexible virtual NIC type did not work on my Windows 7 Ultimate x86 VM without VMware Tools. That NIC type did work on other Windows (2008 R2 x64, 2003, XP) VMs that didn’t have VMware Tools.

>> I got my first (in this lab) Active Directory domain controller set up. I’m using Windows Server 2003 R2 SP2. I plan to upgrade to 2008 R2 later on, so I’m doing this as a learning experience. I added both soon-to-be cluster nodes to the domain and started configuring the prerequisites for the cluster. It’s not difficult, but cluster configuration involves a lot of steps. I had never set up a cluster before, but I know the basics of what needs to be done. Regardless, it took me a few hours just to install the prerequisites for Exchange Server 2003 (yeah, I jumped ahead) and to configure and test the iSCSI targets. I also ran Exchange ForestPrep and DomainPrep.

>> For some reason, Disk Management keeps changing the order of the iSCSI disks after a few reboots. I have the disk drive letters and labels as follows: F: is F-EDB, G: is G-Log, and Q: is Q-Quorum. When I initially added the iSCSI targets on both nodes, they showed up in Disk Management as F: = Disk 1, G: = Disk 2, and Q: = Disk 3. After some reboots, the drive letters and disk numbers might end up in the opposite order or some other combination.

The iSCSI disks’ bus number, target ID, and LUN never changed, so I don’t know why the disk numbers get switched around. This happens on both servers and neither server shows any disk errors in the system log and can access the disks fine.

The drive letters and labels always stay the same, so I guess the cluster will work correctly. From what I concluded after reading this MS KB on Managing Disk Ownership in a Windows Server 2003 Cluster, as long as the disk resources fail over correctly, I should be fine, since the drive letters are always the same. That’s what I figured would happen, so we’ll see if that’s the case after I set up the cluster and test failover. [I did test failover several times after I set everything up and had no issues--2011-01-12.]

As a reminder, I’m using Windows Server 2003 R2 SP2 with Microsoft iSCSI Software Initiator Version 2.08 for this cluster.

>> As a test, I was able to access all three iSCSI disks at the same time from both (soon to be) nodes. I figured that this wouldn’t work, and isn't something that one should even attempt, but I tried it anyway. Windows let me write files to the same disks from both nodes, even though the files couldn’t be seen from across nodes. After shutting down both nodes and restarting node 1, node 1 had several NTFS errors in the event log.

I reformatted all three iSCSI disks and everything worked fine again afterwards. I don’t know why Windows even let me write files to the disks; it must have cached the writes, but I did wait a few seconds after writing the files and checked the disks to make sure the files were on there, so the cache should have been flushed by then. I was just doing this as a test, so I didn't bother to look into this any further.

This was only a test. When setting up your cluster, leave all other nodes powered off and only leave the first node powered on. If all nodes are on, you could corrupt the shared disks and have to remove the cluster and reformat the shared disks and start the cluster setup again.

>> Cluster Setup - After getting my FreeNAS iSCSI targets set up and tested with both servers, I followed most of the steps in the Guide to Creating and Configuring a Server Cluster under Windows Server 2003 White Paper. It's a decent doc and is pretty easy to follow. Note that this only sets up the very basic components of a cluster; I still need to set up Exchange on the cluster, which I'll probably do this week.

>> The New Server Cluster Wizard setup detected everything fine and automatically selected Q: as the quorum disk. I didn’t get any errors during setup. After setup, I manually disabled the iSCSINet NIC for cluster use. Besides the cluster group named Cluster Group, two other groups were also created, Group 0 for the F: disk and Group 1 for the G: disk. So basically a group with one disk resource is created for each disk that could possibly be used as a shared cluster disk. You can delete the extra groups and disk resources from within Cluster Administrator if you don’t want them used in the cluster.

>> The cluster service gets installed on each node (the actual files are already included in the base install of Windows Server 2003 Enterprise Edition). Its name is Cluster Service, the executable path is C:\WINDOWS\Cluster\clussvc.exe, and it logs on with the cluster service account.

>> The cluster name is registered in DNS, but a computer account is not created in AD. I had always thought that a separate computer account was created for the cluster itself, but it looks like a computer account only gets created for each clustered application (Exchange, SQL, etc.) when the application is installed on the cluster.

>> All three iSCSI disks on node 2 appear as unreadable in Disk Management. This is because the cluster service has locked access to these disks since node 1 is the current owner. This is unlike my test earlier, before I created the cluster. In that test, both pre-cluster servers were able to access the iSCSI disks at the same time.

>> The disk numbers in Disk Management are still not remaining consistent, but after failover, I can access them via \\cluster-name\f$, g$, and q$ with no issues. I tried to Google for this issue the other day and didn’t come up with anything, so hopefully it’s not going to cause my Exchange cluster to malfunction.

2011-01-06

>> FreeNAS: I’m not sure if this is possible or not, but I don’t see a way via the Web GUI to add more than one extent to a target. I Googled for this the other day and didn’t see anything. There might be a way to do this via the console, but I don’t need it enough to spend time looking into it.

Another thing that I don’t see in the Web GUI is something that shows used and free space for each extent/target. The virtual device management object itself shows that, but I don’t see it broken down further.

>> I'm curious how the Cluster Administrator program knows which clusters are available to connect to. If I select to browse for a cluster to connect to, my cluster automatically shows up. I don’t have WINS and I’ve checked just about everywhere that I could think of in DNS and AD (even using ADSIEdit to view the configuration partition) and I can’t see how this works. From what I can tell, the only entry for the cluster name is a standard Host (A) entry in DNS. Maybe it works via broadcast to find the clusters on the same subnet.

>> I was a bit confused about how to install and configure Microsoft Distributed Transaction Coordinator (MSDTC), which is a prerequisite for the Exchange cluster. The article Deploying Exchange Server 2003 in a Cluster mentions these two articles for MSDTC:

- How to Install the Microsoft Distributed Transaction Coordinator in a Windows Server 2003 Server Cluster.

- How to Configure Microsoft Distributed Transaction Coordinator on a Windows Server 2003 Cluster.

These articles were what caused the confusion. After reading both and referencing my work’s production Exchange cluster, the first article is the one that you want to follow. It is written specifically for dedicated Exchange clusters.

The second article is just for a general install of MSDTC on clusters that support Exchange and other clustered apps. It’s not even a best practice to configure multiple clustered apps on the same cluster with an app like Exchange, so I don’t know who would do that. I was about to follow the instructions in the second article until I re-read Deploying Exchange Server 2003 in a Cluster.

>> My next step was to follow How to Create an Exchange Virtual Server in a Windows Server Cluster. There are a bunch of sub-steps here, like when setting up the base cluster.

>> I thought there was a special way to install Exchange on a cluster, but it’s just a standard install on both nodes. The nodes won’t show up in Exchange System Manager (ESM) because Exchange setup knows that the servers are cluster nodes, so it doesn’t actually create the servers in the Exchange org. Setup basically installs the Exchange program files, adds the Exchange resource types to the cluster, and creates the Exchange org (if this is the first Exchange server, which it is in this case). The only Exchange-related service that is started on the nodes is Microsoft Exchange Management.

After you install Exchange on all nodes, you go through the process of creating the Exchange Virtual Server (EVS) cluster group and resources. Note that EVS is the term used for the clustered Exchange application and has nothing to do with virtualization in the Hyper-V or VMware sense, although in this case the EVS does happen to run on virtual machines, since my cluster is set up on a VMware ESXi host. Only after you create the EVS does the Exchange “server” object actually show up in ESM.

I didn’t read this anywhere, but I went ahead and updated each node to Exchange Server 2003 SP2 before creating the Microsoft Exchange System Attendant cluster resource. I figured that it’s easier to do it now than later after EVS has been configured. As with the Exchange Server 2003 setup, I ran the SP2 update on each node after moving all resources to the other node.

I also created new Exchange administrative and routing groups, Company-AG-01 and Company-RG-01.

>> The AD computer account for the cluster gets created when you create the Network Name cluster resource for the EVS. As noted before, the base Windows Server cluster itself does not have a computer account in AD. The EVS computer account has this description in AD: Server cluster virtual network name account.

>> This seems logical, but make sure that you use Cluster Administrator on one of the two nodes to create all cluster resources. I tried to create the Microsoft Exchange System Attendant resource from the DC and it failed when I brought it online. I thought something was amiss when I created the resource because the creation finished without asking me which administrative and routing groups to put the EVS in. Duh!

>> After the Microsoft Exchange System Attendant resource is successfully created, it’ll create all the other Microsoft Exchange resources, listed below. They’re all dependent on the Microsoft Exchange System Attendant resource. Note that a clustered application will have cluster resources that are tied to actual Windows services.

- Microsoft Exchange HTTP Server Instance

- Microsoft Exchange Information Store

- Microsoft Exchange Message Transfer Agent

- Microsoft Exchange Routing Service

- Microsoft Exchange SMTP Server Instance

- Microsoft Exchange Service Instance

Whenever any instructions mention restarting an Exchange service, do so from within Cluster Administrator by taking the resource (service) offline and then online. Some of the resource and service names don't always match exactly, e.g., Microsoft Exchange MTA Stacks service is the Microsoft Exchange Message Transfer Agent Instance cluster resource.

If you're instructed to "restart the Exchange services or server," you can just take the entire Exchange cluster group (aka EVS) offline and then online. If you actually restart the active node, it would fail over the Exchange group to the other node, so Exchange won’t actually be restarted [The Exchange services on the failing node will stop and the Exchange services on the active node will start, so I guess the Exchange services technically do "restart," but not in the traditional way, so I would still take the EVS offline and then online to really restart Exchange.--2011-01-12].

In ESM, the Servers container will be created under the administrative group selected and the EVS will be created under that.

>> The account used to set up Exchange will be given Exchange Full Administrator permission to the entire Exchange org.

>> I tried to install Microsoft Exchange System Management Tools (Exchange System Manager) on my DC and it required IIS Manager, so I had to install that first. It’s been a while since I had to do this. After that I updated ESM on the DC to Exchange Server 2003 SP2.

This is great! I finally got my Exchange cluster up and have tested failover a few times with no issues.

>> I decided to create three more shared iSCSI disks for the cluster (H, I, and J). So now I have these six disks total:

- chh-excevs-01-F-EDB

- chh-excevs-01-G-Log

- chh-excevs-01-H-MsgTrackLog

- chh-excevs-01-I-MTA

- chh-excevs-01-J-SMTP

- chh-excevs-01-Q-Quorum

>> If a Physical Disk resource is taken offline on the owning node, Disk Management on the owning node will still show the offline disks, but you won’t be able to see their labels and if you try to access the disks, you’ll get a “<drive>:\ is not accessible. The device is not ready” error.

>> I decided to make some additional changes by relocating certain file locations to the shared cluster disks. This isn’t a requirement, but I’ve seen it done in production before, so I wanted to go through the process myself. Here’s what I did:

How to change the Exchange 2003 SMTP Mailroot folder location

- I used method 2, but didn't bother with the diagnostic logging. Also, Adsiedit.dll doesn't need to be registered on Windows Server 2003.

How to change the location of the MTA Database and the MTA Run Directory in Exchange 2000 Server

- I didn’t really need to do this since I won’t have any connectors that use X.400/MTA, but I figured I’d do it for practice.

- Read the cluster info at the bottom of the KB before proceeding!

- I don't know where the mtacheck program is; I searched all drives and didn't find anything named mtacheck. The changes worked anyway and I had no errors in the logs.

How to change the location of the message tracking logs in Exchange Server 2003

- Nothing special here. This one was the easiest to do.

>> VMware: I was messing around with PowerShell again trying to do some basic stuff like Get-VM and output that to a text file named with the current date and time. I found that the custom date-time specifiers are case sensitive, e.g., MM is numeric month and mm is minutes. See the Custom Formatting section at http://technet.microsoft.com/en-us/library/ee692801.aspx.

Here’s how my script looks:

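# Load the VMware vSphere PowerCLI snap-in.
Add-PSSnapin VMware.VimAutomation.Core

# Lab host and credentials (hardcoded only because this is a test lab).
$VMwareServer = "chh-vmware-01"
$VMwareUser = "root"
$VMwarePassword = "P@ssw0rd"

# Format specifiers are case sensitive: MM = month, mm = minutes, HH = 24-hour hour.
$DateToday = Get-Date -Format "yyyy-MM-dd-HH-mm"

# Keep the connection object so the same session can be closed at the end.
$Connection = Connect-VIServer -Server $VMwareServer -Protocol https -User $VMwareUser -Password $VMwarePassword

# Write the VM list to a text file named with the current date and time.
Get-VM | Out-File "Get-VM_$DateToday.txt"

# Close the session without prompting.
Disconnect-VIServer -Server $Connection -Confirm:$false

# Pause so the console window stays open when the script is launched by double-clicking.
Write-Host "**** Press any key to continue. ****"
$x = $host.UI.RawUI.ReadKey("NoEcho,IncludeKeyDown")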
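The same case-sensitivity trap exists outside PowerShell. As a rough illustration (Python, not part of the PowerCLI script), Python's strftime directives also distinguish %m (month) from %M (minute). The helper name get_vm_report_name is hypothetical and just rebuilds the file name the script above writes.

```python
from datetime import datetime


def get_vm_report_name(now: datetime) -> str:
    """Build a file name shaped like the PowerShell script's Get-VM_<date>.txt.

    Python's format directives are case sensitive, just like the .NET ones:
    %m is the month and %M is the minute; %H is the 24-hour hour.
    """
    return f"Get-VM_{now:%Y-%m-%d-%H-%M}.txt"


if __name__ == "__main__":
    stamp = datetime(2011, 1, 6, 7, 40)
    print(get_vm_report_name(stamp))  # Get-VM_2011-01-06-07-40.txt
    # Using the wrong case for the month silently gives you the minutes instead.
    print(f"{stamp:%Y-%M-%d}")        # 2011-40-06
```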

>> VMware: I changed the label on my “production” vSwitch and the VMs were still able to connect fine, even though the dropdown box under the VMs’ Network Connection setting turned blank. After a reboot, and even after a full shutdown and power-on, the VMs couldn’t connect to the network. I had to go into each VM and select the new vSwitch name from the dropdown box. After that everything was fine.

You’d figure that the host would be smart enough to not actually use the label name but some other type of identifier, so that if a vSwitch is renamed, it doesn’t mess anything up. That would be analogous to how Windows uses the SID for user accounts, so changing the username wouldn’t mess up permissions to folders.

>> VMware: I tested to see if an ESXi 4.1 host could run on a VM inside a physical ESXi host and it does work fine. I was able to use the same free version license key that I used on the physical ESXi host. I figured this would work since it works on VMware Workstation, but I tried it anyway since it didn't take long to set up. I did a custom VM setup with the following:

- Virtual Machine version 7

- Guest OS: Other (64-bit)

- 1 processor

- 2048 MB RAM

- E1000 NIC

Now that I know this works, I might try the 60-day eval of the full vSphere to test vMotion and such. I can use my existing FreeNAS VM for shared storage between the ESXi host VMs.

>> I needed to set up a VM router to create separate subnets. I've read about Vyatta before and it seems to be a popular choice for a VM router and there's a nice video (for older versions 3 and 4) here.

I also found Freesco, which, amazingly, runs entirely off a floppy (a virtual floppy image). I set this up since all I needed was basic routing functionality, and Freesco is a no-frills, low-resource system. Here's a tutorial on an older version (0.3.4).

>>Freesco router: So hours later (much longer than I thought), I finally got Freesco set up as I wanted. The documentation sucks! It took me hours to track down how to disable the firewall (more on this later). Here’s basically how to set up Freesco:

- Set up all your vSwitches for each subnet that you want Freesco to route between.

- Configure a VM with OS Other Linux (32-bit), 256 MB RAM, and no hard drive. Add a flexible NIC for each vSwitch/subnet; do this now, before you even set up Freesco. I tried adding additional NICs afterwards and Freesco ended up not seeing any NICs at all. I eventually went back and started from scratch rather than spend more time figuring out what was wrong.

- Download latest version (currently 0.4.2) from http://freesco.sourceforge.net and extract it.

- Find the file named freesco.042 (no file name extension—the number will change with the version number). This file is the actual floppy image. I’m not sure why they don’t name it with a .img or .flp extension so it’s easy to recognize what the file is. I had to spend some time figuring this out.

- Upload the file to the ESXi datastore in the same location as the VM and rename the file to something like chh-vmrouter-01_freesco.042.flp (chh-vmrouter-01 is the name of the Freesco VM).

- Configure the VM to use that floppy image and connect at power on.

- Start the VM and go through all the settings—there are dozens of prompts for the initial setup. The initial login username/password is root/root. The username for the Web GUI is admin—you were prompted to change its password during initial setup.

- If you need to get back into setup again, just type setup after logging in.

- Use the halt command to put Freesco in a state where it can be powered off; Freesco doesn't accept the shutdown or poweroff commands. Once you get the “system halted” message on the console, you can power off the VM. Again, more time wasted figuring this out.

- For the Freesco interface that’s on the same subnet as your physical/Internet router, make sure to set that physical router’s IP address as the gateway on the interface. I didn’t do that and spent some time troubleshooting. I stumbled upon the gateway setting when looking through the settings and it made sense to add that in. This wasn’t clear in the initial setup, but it makes sense—just basic networking. I didn’t realize this because I normally don’t set up routers. If it was a computer having network issues, the gateway setting would definitely be one of the first things that I’d check.

- On your physical router, set up a static route to your new vSwitch subnets (via Freesco) so that those subnets can access the rest of your home network and the Internet. The static route settings are probably in the advanced network settings section of the router’s admin GUI.
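Why the static route matters: once it's in place, the physical router picks the most specific (longest-prefix) route that matches each destination. The sketch below models that lookup with Python's ipaddress module. The routing table is hypothetical. 192.168.1.101 is Freesco's home-LAN address from the ping test in these notes, and the "ISP gateway" default route stands in for whatever the real router uses. Without the 10.10.0.0/16 entry, replies to the VM subnet would fall through to the default route and go out toward the Internet instead of to Freesco.

```python
import ipaddress

# Hypothetical routing table on the physical home router after adding the static route.
# 192.168.1.101 is Freesco's interface on the home LAN.
ROUTES = [
    (ipaddress.ip_network("0.0.0.0/0"), "ISP gateway (default route)"),
    (ipaddress.ip_network("192.168.1.0/24"), "directly connected"),
    (ipaddress.ip_network("10.10.0.0/16"), "192.168.1.101 (Freesco)"),
]


def next_hop(destination: str) -> str:
    """Return the next hop for a destination using longest-prefix match."""
    addr = ipaddress.ip_address(destination)
    # The default route matches everything, so this list is never empty.
    matches = [(net, hop) for net, hop in ROUTES if addr in net]
    # The most specific route (largest prefix length) wins.
    _, hop = max(matches, key=lambda item: item[0].prefixlen)
    return hop


if __name__ == "__main__":
    for dest in ("10.10.5.20", "192.168.1.59", "8.8.8.8"):
        print(dest, "->", next_hop(dest))
```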

There was still one issue with Freesco that had me stumped. From two other VMs I got the destination port unreachable message below when I pinged Freesco (192.168.1.101). I could access the Freesco Web GUI from both VMs though.

Reply from 192.168.1.101: Destination port unreachable.

I figured it was a firewall issue and it turned out that Freesco has some firewall rules that turn off ICMP responses, among other things.

This is how I currently have Freesco set up:

192.168.1.0/24 (“Internet”) <--> Freesco <--> 10.10.0.0/16

I also couldn’t browse file shares on a host in 10.10.0.0/16 from a host in 192.168.1.0/24. So it looks like Freesco set up some firewall rules with the assumption that 192.168.1.0/24 is the Internet-facing interface. OK, better safe than sorry. Now how do I disable the firewall altogether, since both networks are internal? Searching for “Freesco disable firewall” didn’t yield anything useful.

At the Freesco main logon screen it shows “NAT and firewalling is enabled,” which means that the firewall is always enabled at bootup. I searched and searched for hours on how to disable the firewall. I found some references to ipfwadm and was able to remove all the firewall rules, but I didn’t know how to save the changes. I really just wanted to disable ipfwadm (the firewall) altogether. Something that simple eluded me for hours more until I found the fix myself.

I poked around in the Freesco Web GUI and under Info --> System, I saw this:

----- cat /etc/system.cfg -----

# [System]

ROUTER=ethernet # dialup/leased/ethernet/bridge

HOSTNAME=chh-vmrouter-01 #Router name

DOMAIN=company-ad.sysadmin-e.com #Local domain

ENAMSQ=y #NAT/firewall

OK, so it looks like if I edit the file /etc/system.cfg, change ENAMSQ=y to ENAMSQ=n, and reboot, NAT and firewalling will probably be disabled. So based on that, I did this:

- Logged into the console as root.

- cd /etc

- edit system.cfg

- Change ENAMSQ=y to ENAMSQ=n

- F10 to exit

- y to save changes

- Reboot

Uh, that didn’t work! I knew I was getting close though. At this point, I was getting very frustrated that something so darn simple to do was taking so long. You’d think that Googling for “Freesco disable firewall” would have yielded dozens of helpful instructions--that seems to be the best search term since Freesco uses the word "enabled" so the opposite of that should be some form of "disable."

Next I searched for "Freesco system.cfg" and found http://wejump.ifac.cnr.it/pages/sw/linux/freesco/doc/p11.shtml. There was a reference there for sedit, which isn't currently a supported command in Freesco. I found another command on that page, sysedit and tried that. Just typing in sysedit brought up config.cfg. When I edited, saved, and rebooted, Freesco came up with the firewall disabled. Yes, finally!!! I knew it would be something simple like that.

Basically what happened was that the edit command that I used earlier only edited the config.cfg file in RAM and didn't save the changes to the floppy/hard disk. This is sort of like how Cisco devices work: there's a running-config in RAM and a startup-config stored in non-volatile RAM (NVRAM). If you want the changes that you make to running-config to survive a reboot, you must save them to startup-config with the copy running-config startup-config (copy run start) command. Per the Freesco doc, sysedit edits the primary configuration file and copies it afterwards so that the changes will survive a reboot.
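The system.cfg lines shown under Info --> System use a simple KEY=value layout with optional # comments. As a minimal sketch, assuming that format holds for the whole file (I only saw a few lines of it), this is how one might read and flip ENAMSQ on a copy of the file while keeping the comments. It only illustrates the file format; on the router itself, sysedit is still what makes the change stick across a reboot. parse_cfg and set_cfg_value are hypothetical helper names.

```python
import re

# Assumed line format, inferred from the lines shown above: "ENAMSQ=y #NAT/firewall".
CFG_LINE = re.compile(r"^(?P<key>[A-Z0-9_]+)=(?P<value>\S*)(?P<rest>.*)$")

SAMPLE = """# [System]
ROUTER=ethernet # dialup/leased/ethernet/bridge
HOSTNAME=chh-vmrouter-01 #Router name
DOMAIN=company-ad.sysadmin-e.com #Local domain
ENAMSQ=y #NAT/firewall
"""


def parse_cfg(text: str) -> dict:
    """Return KEY -> value for every KEY=value line; '#' comment lines are skipped."""
    settings = {}
    for line in text.splitlines():
        match = CFG_LINE.match(line.strip())
        if match:
            settings[match["key"]] = match["value"]
    return settings


def set_cfg_value(text: str, key: str, value: str) -> str:
    """Return a copy of the config with KEY's value replaced and its comment kept."""
    out = []
    for line in text.splitlines():
        match = CFG_LINE.match(line.strip())
        if match and match["key"] == key:
            line = f"{key}={value}{match['rest']}"
        out.append(line)
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    print(set_cfg_value(SAMPLE, "ENAMSQ", "n"))
```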

>>Now on to the next issue. I’ve been getting the disk errors below on the active cluster node about 2 minutes after bootup. This doesn’t happen every time, but most of the time. It doesn’t matter if it’s a cold or warm boot. I’ve disabled write caching on all the iSCSI disks and the error still shows up. It looks like the write caching is set within the shared disks themselves because the settings get carried over to the other node after failover.

At this point, I’m not sure if I need to worry too much about this error because it only happens at bootup; I can leave the active cluster node on for hours and never get a disk error. We’ll see what happens when I start creating mailboxes and sending test messages.

Event Type: Warning

Event Source: Ftdisk

Event Category: Disk

Event ID: 57

Date: 1/6/2011

Time: 7:40:31 AM

User: N/A

Computer: CHH-EXCNODE-01

Description:

The system failed to flush data to the transaction log. Corruption may occur.

For more information, see Help and Support Center at http://go.microsoft.com/fwlink/events.asp.

Data:

0000: 00 00 00 00 01 00 be 00 ......¾.

0008: 02 00 00 00 39 00 04 80 ....9..

0010: 00 00 00 00 10 00 00 80 .......

0018: 00 00 00 00 00 00 00 00 ........

0020: 00 00 00 00 00 00 00 00 ........

----------------------------------------

Event Type: Warning

Event Source: Ntfs

Event Category: None

Event ID: 50

Date: 1/6/2011

Time: 7:41:38 AM

User: N/A

Computer: CHH-EXCNODE-01

Description:

{Delayed Write Failed} Windows was unable to save all the data for the file . The data has been lost. This error may be caused by a failure of your computer hardware or network connection. Please try to save this file elsewhere.

For more information, see Help and Support Center at http://go.microsoft.com/fwlink/events.asp.

Data:

0000: 04 00 04 00 02 00 52 00 ......R.

0008: 00 00 00 00 32 00 04 80 ....2..

0010: 00 00 00 00 10 00 00 80 .......

0018: 00 00 00 00 00 00 00 00 ........

0020: 00 00 00 00 00 00 00 00 ........

0028: 10 00 00 80 ...

----------------------------------------

Event Type: Information

Event Source: Application Popup

Event Category: None

Event ID: 26

Date: 1/6/2011

Time: 7:41:38 AM

User: N/A

Computer: CHH-EXCNODE-01

Description:

Application popup: Windows - Delayed Write Failed : Windows was unable to save all the data for the file \Device\HarddiskVolume7\. The data has been lost. This error may be caused by a failure of your computer hardware or network connection. Please try to save this file elsewhere.

For more information, see Help and Support Center at http://go.microsoft.com/fwlink/events.asp.
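The Data: bytes in the two disk warnings aren't random. For events logged by kernel drivers, they appear to be the start of an IO_ERROR_LOG_PACKET structure, and decoding them under that assumption lines up with what Event Viewer shows. The ErrorCode at offset 0x0C ends in 0x39 (57) and 0x32 (50), matching the event IDs. The Ntfs packet's MajorFunctionCode is 4 (IRP_MJ_WRITE), and both packets carry the FinalStatus 0x80000010. If I'm reading ntstatus.h correctly, that is STATUS_DEVICE_OFF_LINE, which would fit the disks not being ready yet at boot (worth verifying). The sketch below does that decoding; the offsets are my reading of the WDK structure, not something from the event log itself.

```python
import string
import struct

FTDISK_57 = """
0000: 00 00 00 00 01 00 be 00 ......¾.
0008: 02 00 00 00 39 00 04 80 ....9..
0010: 00 00 00 00 10 00 00 80 .......
0018: 00 00 00 00 00 00 00 00 ........
0020: 00 00 00 00 00 00 00 00 ........
"""

NTFS_50 = """
0000: 04 00 04 00 02 00 52 00 ......R.
0008: 00 00 00 00 32 00 04 80 ....2..
0010: 00 00 00 00 10 00 00 80 .......
0018: 00 00 00 00 00 00 00 00 ........
0020: 00 00 00 00 00 00 00 00 ........
0028: 10 00 00 80 ...
"""


def dump_to_bytes(dump: str) -> bytes:
    """Turn Event Viewer 'Data:' lines ("0008: 02 00 ... ....9..") into raw bytes."""
    data = bytearray()
    for line in dump.strip().splitlines():
        _, _, rest = line.partition(":")
        for token in rest.split()[:8]:
            # Stop at the ASCII column, which is not a two-digit hex pair.
            if len(token) != 2 or any(c not in string.hexdigits for c in token):
                break
            data.append(int(token, 16))
    return bytes(data)


def decode_io_error_packet(data: bytes) -> dict:
    """Decode the fixed header of an IO_ERROR_LOG_PACKET (assumed WDK layout)."""
    major, retry, dump_size, _n_strings, _str_offset, category = struct.unpack_from(
        "<BBHHHH", data, 0
    )
    # ErrorCode starts at 0x0C after 2 bytes of alignment padding.
    error_code, _unique, final_status = struct.unpack_from("<III", data, 0x0C)
    dump_data = None
    if dump_size >= 4 and len(data) >= 0x2C:
        dump_data = struct.unpack_from("<I", data, 0x28)[0]
    return {
        "major_function": major,           # 4 = IRP_MJ_WRITE
        "retry_count": retry,
        "event_category": category,
        "event_id": error_code & 0xFFFF,   # low 16 bits = event ID
        "severity": error_code >> 30,      # 0=success, 1=info, 2=warning, 3=error
        "final_status": f"0x{final_status:08X}",
        "dump_data": dump_data,
    }


if __name__ == "__main__":
    for dump in (FTDISK_57, NTFS_50):
        print(decode_io_error_packet(dump_to_bytes(dump)))
```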

>> SyntaxHighlighter is driving me nuts!!! I used its tags in the PowerShell code example, and other parts of today's update got changed to "formatted" text. I know it's SyntaxHighlighter because I noticed the issue when I first installed it and mentioned it in my post on SyntaxHighlighter. I'll still use SyntaxHighlighter, but only for specific posts, not in something long like this, and I will only add the tags after I've finished proofreading and formatting the post. I must have spent an hour today going back and forth correcting the areas that SyntaxHighlighter messed up. Finally, I gave up, removed all the tags, and just changed the text color to blue for areas that I wanted to be formatted as code or formatted text. I'm done for today. No more messing with computers for the rest of the night!

2011-01-10

>> Exchange: I needed to move the public folder hierarchy from the default First Administrative Group to Company-AG-01, which was the AG that I created when I set up the Exchange org. I followed the instructions in How to move a Top Level Hierarchy to a different Administrative Group. Now First Administrative Group is empty and can be deleted.

>> Exchange: I renamed the default storage group, mailbox store, and public store to the names below.

- CHH-EXCEVS-01-SG01

- CHH-EXCEVS-01-SG01-MB01

- CHH-EXCEVS-01-SG01-PF01

I changed the location of the default mailbox store’s database and streaming database files from F:\EXCHSRVR\MDBDATA\ to G:\CHH-EXCEVS-01-SG01\ and renamed the files (in the same operation) as follows:

- CHH-EXCEVS-01-SG01-MB01.edb

- CHH-EXCEVS-01-SG01-MB01.stm

I changed the location of the public store’s database and streaming database files from F:\EXCHSRVR\MDBDATA\ to G:\CHH-EXCEVS-01-SG01\ and renamed the files (in the same operation) as follows:

- CHH-EXCEVS-01-SG01-PF01.edb

- CHH-EXCEVS-01-SG01-PF01.stm

I changed the location of the storage group’s transaction log and system paths from F:\EXCHSRVR\MDBDATA\ to G:\CHH-EXCEVS-01-SG01\.

On F:\, which is the original location where I had Exchange installed when I set up the EVS, there’s a folder under EXCHSRVR named ExchangeServer_CHH-EXCEVS-01. It looks like the folder contains EVS cluster-specific configuration files. The entire folder only takes up 381 KB, so I’ll leave it alone.

I was still getting the error below more than 15 minutes after I made the changes above. I only have one DC and it’s in the same site as the EVS, so there shouldn’t be much replication latency. I restarted the EVS and never got the error again.

Event Type: Error

Event Source: MSExchangeSA

Event Category: MAPI Session

Event ID: 9175

Date: 1/7/2011

Time: 9:37:06 AM

User: N/A

Computer: CHH-EXCNODE-01

Description:

The MAPI call 'OpenMsgStore' failed with the following error:

The attempt to log on to the Microsoft Exchange Server computer has failed.

The MAPI provider failed.

Microsoft Exchange Server Information Store

ID no: 8004011d-0512-00000000

I created three more mailbox stores under SG01, then created a new storage group, SG02, and created four mailbox stores under that. I noticed that before I created and mounted the SG02 mailbox stores, there were no files at all under SG02’s transaction log and system paths. That makes sense, since the storage group doesn’t really have to manipulate any files if there are no stores in it.

>> Cluster: I’ve noticed before on a two-node Windows Server 2003 cluster that the event logs had entries for both nodes in them. I looked that up today and found the KB How to configure event log replication in Windows 2000 and Windows Server 2003 cluster servers. This is a default setting and it's called Event Log Replication, which replicates the System, Application and Security event logs to all cluster nodes and can be disabled via the cluster.exe command.

>> I set up an Internet Information Services server (version 6.0 - Windows Server 2003 R2 SP2) for my wife to use for testing. I made a CNAME entry for intranet that points to the actual IIS server. She wasn’t sure which version of IIS was used at her work, but I knew there were techniques for “fingerprinting” a Web server. I found some info here that gave me the command that I needed.

If I telnet to port 80 of the server and issue the HEAD / HTTP/1.0 command and press enter twice, I get back some info about the IIS server. Note that there’s a space before and after the first /. There are methods that Web server admins can use to block this info, so you might not get back any useful info at all, especially from Internet-facing Web sites.

The default telnet setting won’t echo (display) the command as you type it, so it’s easier to just type HEAD / HTTP/1.0 into Notepad and then copy and paste it into the command prompt window. There’s a way to configure telnet to echo, but I didn’t get it to work and didn’t need it enough to look into it more.

Here’s what I did from a Windows XP command prompt (the first four lines below are what I typed; everything after them is the server's response):

telnet intranet 80

HEAD / HTTP/1.0

<Enter>

<Enter>

HTTP/1.1 200 OK

Content-Length: 1433

Content-Type: text/html

Content-Location: http://192.168.1.59/iisstart.htm

Last-Modified: Fri, 21 Feb 2003 23:48:30 GMT

Accept-Ranges: bytes

ETag: "09b60bc3dac21:251"

Server: Microsoft-IIS/6.0

MicrosoftOfficeWebServer: 5.0_Pub

X-Powered-By: ASP.NET

Date: Sat, 08 Jan 2011 06:48:08 GMT

Connection: close

--------------------

This is the response from the Web server that’s included with FreeNAS 0.7.2 Sabanda (revision 5543):

HTTP/1.0 302 Found

Status: 302 Moved Temporarily

X-Powered-By: PHP/5.2.14

Set-Cookie: PHPSESSID=16249782aedb184377cd85731513cd6a; path=/

Expires: Thu, 19 Nov 1981 08:52:00 GMT

Cache-Control: no-store, no-cache, must-revalidate, post-check=0, pre-check=0

Pragma: no-cache

Location: login.php

Content-type: text/html

Connection: close

Date: Mon, 10 Jan 2011 15:40:25 GMT

Server: lighttpd/1.4.28

As you can see from the first response, the intranet Web server is Microsoft-IIS/6.0, which is the IIS version included in Windows Server 2003. And for both, the X-Powered-By header shows the server-side framework in use (ASP.NET for the IIS server, PHP for FreeNAS).
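The telnet trick can also be scripted, which gets around the local-echo annoyance. Below is a minimal Python sketch using only the standard socket module. It sends the same HEAD / HTTP/1.0 request and pulls out the Server and X-Powered-By headers. The Host header is my addition (HTTP/1.0 doesn't require it, but it helps with name-based virtual hosts), and "intranet" is the CNAME from above. fetch_head needs a live server, so the parsing is shown against trimmed copies of the two responses captured above.

```python
import socket


def build_head_request(host: str) -> bytes:
    """The same request typed into telnet, plus a Host header."""
    return f"HEAD / HTTP/1.0\r\nHost: {host}\r\n\r\n".encode("ascii")


def fetch_head(host: str, port: int = 80, timeout: float = 5.0) -> str:
    """Send the HEAD request and return the raw response (requires a reachable server)."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(build_head_request(host))
        chunks = []
        while True:
            chunk = sock.recv(4096)
            if not chunk:  # HTTP/1.0 servers close the connection when done
                break
            chunks.append(chunk)
    return b"".join(chunks).decode("iso-8859-1")


def parse_head_response(raw: str):
    """Split a raw response into (status line, {lowercased header name: value})."""
    lines = raw.replace("\r\n", "\n").split("\n")
    headers = {}
    for line in lines[1:]:
        if not line.strip():
            break  # a blank line ends the header block
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return lines[0].strip(), headers


IIS_SAMPLE = """HTTP/1.1 200 OK
Content-Location: http://192.168.1.59/iisstart.htm
Server: Microsoft-IIS/6.0
X-Powered-By: ASP.NET
Date: Sat, 08 Jan 2011 06:48:08 GMT
"""

FREENAS_SAMPLE = """HTTP/1.0 302 Found
X-Powered-By: PHP/5.2.14
Location: login.php
Server: lighttpd/1.4.28
"""

if __name__ == "__main__":
    for sample in (IIS_SAMPLE, FREENAS_SAMPLE):
        status, headers = parse_head_response(sample)
        print(status, "|", headers.get("server"), "|", headers.get("x-powered-by"))
```

Against a live server, parse_head_response(fetch_head("intranet")) returns the same kind of result, unless the admin has stripped or changed those headers.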

>> I set up a VM for Fedora 14 32-bit to mess around with Linux. I selected the KDE Plasma Desktop. I actually had to reinstall Fedora because the first time I installed I did a customized setup and split up the disk into two partitions, and the root partition ended up getting low on space after I installed all the updates. I didn’t install VMware Tools, but the mouse is able to go from the VM to my regular desktop without requiring me to press CTRL+ALT.

>> I started setting up a Windows Server 2003 R2 SP2 server to install SQL Server 2005 Enterprise Edition on. I set up two FreeNAS iSCSI disks for it as follows:

- chh-mssql-01-F-MDF For .MDF and .NDF database files.

- chh-mssql-01-G-LDF For .LDF database log files.

Here’s an article on SQL Server 2005 Physical Database Files and Filegroups that describes the physical files related to a database. I’m not a SQL DBA, but I know enough from working with Exchange to know that databases and transaction log files should be kept on separate disk spindles for improved performance and recoverability.

>> I had to install IIS as a prerequisite and then installed SQL Server 2005 Enterprise Edition with all components. I accepted all the defaults and created a service account in AD named svcMSSQL. I also manually added that service account to the local Administrators group on the server.

This is weird, but for some reason F: was not shared out as F$ while G: was shared out as G$ (both are iSCSI disks that were configured exactly the same way). I had to manually share out F$. I’m not getting any (iSCSI) disk errors so far, after several reboots, unlike the Exchange cluster where I regularly get disk errors after reboot.

I had selected the Microsoft MPIO Multipathing Support for iSCSI option when I installed the iSCSI initiator, so I don’t know whether that made a difference or whether the errors only occur on cluster nodes. The network binding order has the iSCSI NIC first, like this:

- iSCSINet

- ProductionNet

>> I updated SQL Server 2005 to SP3, which took it from version 9.0.1399 to 9.0.4035.

Note the default location for the system databases and newly created databases is C:\Program Files\Microsoft SQL Server\MSSQL.1\MSSQL\Data\. I moved all four system databases per this MSDN article. Some notes on that article:

The master DB is the trickiest because there are several steps, so pay attention.

For model, msdb, and tempdb, follow the instructions in the section titled Examples at the bottom of the article. Moving tempdb doesn’t involve moving the physical files, because SQL Server re-creates tempdb at the new location when the instance restarts, so you need to go back and delete the old files from the original location. That is noted in the article.

The move of model and msdb does involve physically moving the .mdf and .ldf files, so make sure you do that. You can run all the commands in the example to logically move model, msdb, and tempdb and then stop the SQL instance, move the physical files, and then restart the SQL instance. If you do them in batch like that, you only need to stop/start SQL once.
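If you do the logical moves in one batch, it can help to generate the ALTER DATABASE ... MODIFY FILE statements instead of typing them. The sketch below assumes the SQL Server 2005 default logical and physical file names for model, msdb, and tempdb, and follows the F: (data) / G: (log) split from the iSCSI disks above. The folder names F:\SQLData and G:\SQLLogs are hypothetical. master is deliberately left out, since it is moved through the startup parameters instead. The script only prints the T-SQL; you still run it yourself, then stop SQL, move the model and msdb files, and restart.

```python
import ntpath

# SQL Server 2005 default (logical name, physical file name) pairs for the movable system DBs.
SYSTEM_DB_FILES = {
    "model": [("modeldev", "model.mdf"), ("modellog", "modellog.ldf")],
    "msdb": [("MSDBData", "MSDBData.mdf"), ("MSDBLog", "MSDBLog.ldf")],
    "tempdb": [("tempdev", "tempdb.mdf"), ("templog", "templog.ldf")],
}


def move_statements(data_dir: str, log_dir: str) -> list:
    """Return one ALTER DATABASE ... MODIFY FILE statement per system database file.

    .mdf data files go to data_dir and .ldf log files go to log_dir.
    """
    statements = []
    for db, files in SYSTEM_DB_FILES.items():
        for logical, physical in files:
            target_dir = log_dir if physical.endswith(".ldf") else data_dir
            target = ntpath.join(target_dir, physical)  # Windows-style path on any OS
            statements.append(
                f"ALTER DATABASE {db} MODIFY FILE (NAME = {logical}, FILENAME = '{target}');"
            )
    return statements


if __name__ == "__main__":
    # Hypothetical target folders on the F: (MDF) and G: (LDF) iSCSI disks.
    for stmt in move_statements(r"F:\SQLData", r"G:\SQLLogs"):
        print(stmt)
```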

I still see two other files in the default location, distmdl.mdf and distmdl.ldf. From what I've read, distmdl is a database used for replication. I don't have replication enabled, and the modified date on both files is 11/24/2008, which suggests they’re not actively used. Both files together are under 8 MB, so I’ll leave them alone. The post here states that service packs/hotfixes will fail if those files are missing, even if you don't use replication.

>> iSCSI: I wanted to mention that when setting up a NIC for iSCSI, you should configure the TCP/IP properties like you would for a NIC assigned to a cluster private network (see the MS Cluster Installation doc). Note that I never hardcoded the speed/duplex on the cluster or iSCSI NICs because the VMware Accelerated AMD PCNet Adapter NIC on the nodes, for whatever reason, only has 100Mbps Full Duplex as the fastest option even though the connection status shows 1.0 Gbps as the speed.

As for the network binding order of the cluster, this is what I have:

- ProductionNet

- ClusterNet

- iSCSINet

I had read a few articles about the network binding order and it looks like what I have above is correct. On one of my Google searches for “iscsi initiator bind,” the first two results showed me something I wasn’t aware of and actually helped me fix an issue with the disk errors that the active Exchange cluster node would receive after reboot.

The first result was the MS KB File shares on iSCSI devices may not be re-created when you restart the computer. It mentioned the Bound Volumes/Devices tab in the iSCSI Initiator settings and the BindPersistentVolumes option. What you want to do is persistently bind all your iSCSI disk volumes so that the volumes are available before the application services (Exchange, SQL, etc.) that have data on them are started. The iSCSI Initiator basically lets Windows know not to start the application services until all the iSCSI disks are up and running.
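The Microsoft iSCSI Initiator handles this ordering internally, and I don't know exactly how it is implemented. The following Python sketch only illustrates the general idea: poll until every required volume path is reachable, give up after a timeout, and start the dependent service only if nothing is missing. The function names, timeout values, and the start callback are all hypothetical.

```python
import os
import time
from typing import Callable, Iterable


def wait_for_volumes(paths: Iterable[str], timeout_s: float = 60.0,
                     poll_s: float = 1.0,
                     exists: Callable[[str], bool] = os.path.exists) -> list:
    """Poll until every path exists or the timeout expires.

    Returns the paths that are still missing (an empty list means all are ready).
    """
    pending = list(paths)
    deadline = time.monotonic() + timeout_s
    while True:
        # Drop every path that has become reachable since the last check.
        pending = [p for p in pending if not exists(p)]
        if not pending or time.monotonic() >= deadline:
            return pending
        time.sleep(poll_s)


def start_if_ready(service_name: str, paths: Iterable[str],
                   start: Callable[[str], None], **wait_kwargs) -> bool:
    """Start a service only after all of its volumes are reachable (hypothetical helper)."""
    missing = wait_for_volumes(paths, **wait_kwargs)
    if missing:
        # Comparable in spirit to the "Timeout waiting for iSCSI persistently
        # bound volumes" situation: the dependent service would fail anyway.
        print(f"Not starting {service_name}; volumes not ready: {missing}")
        return False
    start(service_name)
    return True
```

On a real server, paths might be F:\ and G:\ and start could wrap something like subprocess.run(["net", "start", name]), but persistently binding the volumes in the initiator is the recommended way to get this behavior.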

Note that the binding of the iSCSI volumes isn’t related to network binding order. From both EVS nodes, I selected to persistently bind all the iSCSI disks and afterwards I never received those same disk errors after the active node boots.

The other search result was an article from Scott Lowe about installing and configuring the Microsoft iSCSI Initiator. He mentions persistent binding in step 7.

Note that Simon Day's blog never mentions persistently binding the volumes, which I think is a critical step.

>> So now that I know about binding iSCSI volumes, I decided to mess with the SQL Server again. Since I had incorrectly selected the Microsoft MPIO Multipathing Support for iSCSI option when I installed the iSCSI Initiator, I uninstalled and reinstalled it. Afterwards I changed the network binding order to how it should be, with ProductionNet before iSCSINet.

After I made those changes, I got the errors below. The errors were because the G: drive wasn’t accessible and hence the SQL service could not access the master database log file. The master database needs to be running because it’s used to keep track of the SQL server and all other databases. Here’s a short article on the importance of the master database.

After I selected to persistently bind all iSCSI volumes (F: and G:), I never received the errors again and SQL would start up normally. To confirm this, I removed the iSCSI persistent bindings and got the error again. So basically the lesson here is that my SQL server started up fine with either one of these settings:

- Persistently bind all iSCSI volumes (recommended setting).

- Network binding order: iSCSINet before ProductionNet (this was the default, but not the recommended setting).

Event Type: Error

Event Source: MSSQLSERVER

Event Category: (2)

Event ID: 17207

Date: 1/9/2011

Time: 10:28:11 AM

User: N/A

Computer: CHH-MSSQL-01

Description:

FCB::Open: Operating system error 3(The system cannot find the path specified.) occurred while creating or opening file 'G:\mastlog.ldf'. Diagnose and correct the operating system error, and retry the operation.

--------------------

Event Type: Error

Event Source: Service Control Manager

Event Category: None

Event ID: 7024

Date: 1/9/2011

Time: 10:29:43 AM

User: N/A

Computer: CHH-MSSQL-01

Description:

The SQL Server (MSSQLSERVER) service terminated with service-specific error 3417 (0xD59).

>> The Microsoft iSCSI Software Initiator Version 2.X Users Guide has some good info. I didn’t read the whole thing as it gets pretty involved but I did skim through it.

>> Oh, I jumped the gun. The iSCSI binding changes on the cluster nodes actually caused more problems. I get the error below and all of the EVS-specific iSCSI disks are inaccessible on node 2, even though node 2 is the active node, and node 1 is completely shut down.

Node 2 does show that the quorum disk is online, but since none of the EVS disks are online, the EVS is essentially offline. After a few shutdowns and reboots of both nodes, and a few test failovers, the issue seems to have worked itself out. MSCS must not have liked the change in the iSCSI bindings after the cluster was already fully configured. So next time I build a cluster, I’ll make sure the iSCSI bindings are configured before I create the cluster.

I do still sometimes get this error on the passive node when it reboots, but since it’s the passive node, it’s not actually a problem.

Event Type: Error

Event Source: MSiSCSI

Event Category: None

Event ID: 103

Date: 1/9/2011

Time: 12:22:11 PM

User: N/A

Computer: CHH-EXCNODE-02

Description:

Timeout waiting for iSCSI persistently bound volumes. If there are any services or applications that use information stored on these volumes then they may not start or may report errors.

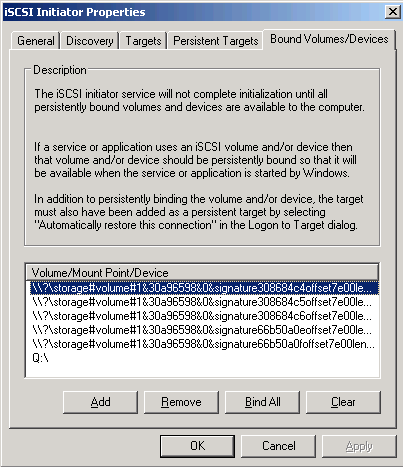

Another thing I’ve noticed is some inconsistency in the entries in the Bound Volumes/Devices tab. I took the screenshots below with the Snipping Tool that comes with Windows 7 (a really convenient tool that I noticed a few months ago but never used until now).

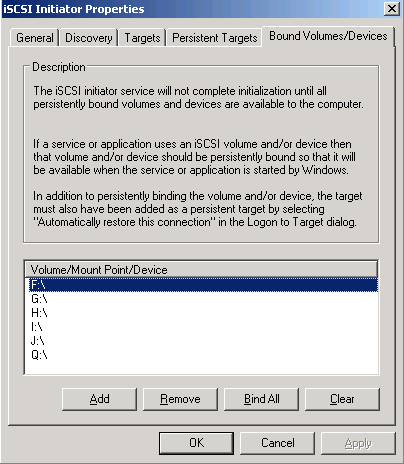

^ Figure 01. Active node with all iSCSI volumes mounted. This looks normal for an active node.

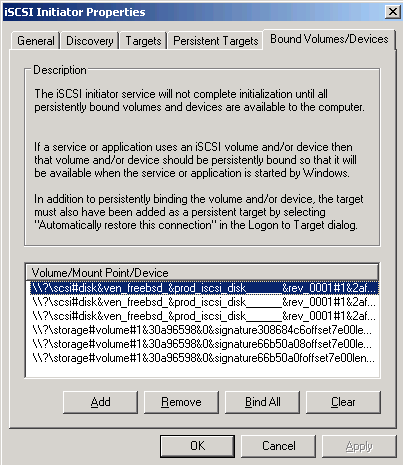

^ Figure 02. Passive node with what I’ll call “iSCSI target paths” and “local disk paths.” The iSCSI target paths are the top three that begin with \\?\scsi, and it looks like this is what the OS uses to reference the iSCSI target. The local disk paths are the bottom three that begin with \\?\storage, and it looks like this is what the OS uses to reference what’s already added in Disk Management. Because this is the passive node and it doesn’t own the shared disks, it has no drive letters for them, which is why these paths show up in place of drive letters.

What I don’t understand is why there are two types of paths here. I can’t scroll all the way to the right to see the entire string, but I’m guessing that one set of three consists of the original three disks that I set up before I created the cluster (F:, G:, and Q:) and the other set of three are the disks that I added after I created the cluster (H:, I:, and J:).

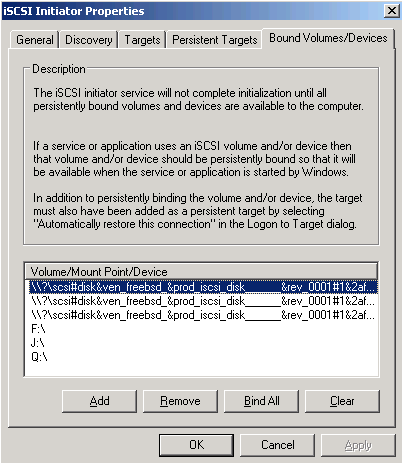

^Figure 03. This is what’s odd. This is the active node, which owns all the disks, yet the top three aren’t shown as drive letters. This is node 2, which had all the disks added after node 1, per the cluster disk setup procedure.

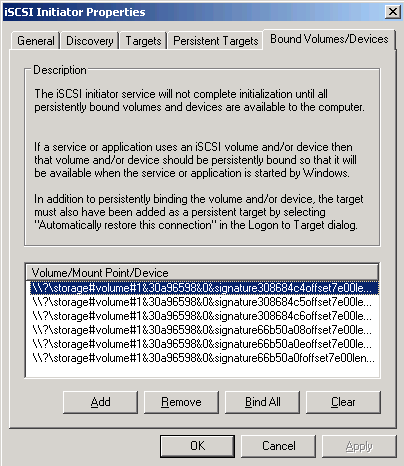

^Figure 04. This is what I think looks normal for a passive node. It has the “local disk paths” listed for all disks.

^Figure 05. This is for a node that only owns the Cluster Group (the other node still owns the EVS). This looks normal: since the node owns Q: (the quorum disk), it makes sense that Q: would show up. I had manually failed over the Cluster Group to this node, and the Q: drive letter automatically showed up in the Bound Volumes/Devices tab.

From the examples above, it looks like there’s a bug with how the Microsoft iSCSI Initiator displays the entries in the Bound Volumes/Devices tab. Everything actually works fine, so it’s just a display name issue. To manually display the correct entries, click on the Clear button and then click on the Bind All button.

>> Group Policy: I should have known better, but I'm a bit rusty on GPOs. I had unchecked the option to define the policy "Password must meet complexity requirements" in the Default Domain Policy so that I could use simple passwords for my test accounts. That didn't work; I had to go back and set the policy to Disabled. IIRC, if a policy isn't defined, whatever the setting was will remain. Only if a policy is Enabled or Disabled will its setting actually get changed. Since that setting was enabled by default, I had to disable it to clear out the setting.
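The difference between "Not Defined" and "Disabled" can be captured in a few lines of Python. This is a simplified, hypothetical model of the behavior described above for a single setting, not how the Group Policy engine is actually implemented.

```python
from typing import Optional


def apply_policy(current: bool, policy: Optional[bool]) -> bool:
    """Return the effective value of one setting after a policy refresh.

    policy is None for "Not Defined", True for Enabled, False for Disabled.
    """
    # An undefined policy changes nothing, so the previous value stays in effect.
    if policy is None:
        return current
    # Only an explicit Enabled/Disabled overwrites the setting.
    return policy


# "Password must meet complexity requirements" starts out enabled.
complexity = True
complexity = apply_policy(complexity, None)   # unchecked "Define this policy setting"
still_enabled = complexity                    # simple passwords are still rejected
complexity = apply_policy(complexity, False)  # explicitly set to Disabled
```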

>> I updated the Exchange MSCS cluster with all high-priority Windows and Exchange updates as of 2011-01-10 per my instructions here.

2011-01-17

>> I installed a new instance of SQL Server 2005 Enterprise Edition named MSSQLOCS and selected all components. I accepted all the defaults and used the same service account, svcMSSQL. The new instance was installed into C:\Program Files\Microsoft SQL Server\MSSQL.3\.

The new instance is for Office Communications Server 2007 (OCS) (I know this is not the latest version, but the client I work for uses it, so I need to understand it better). This MS TechNet article states that “the SQL Server instance that hosts the Office Communications Server database must be dedicated.” I will not create a separate iSCSI disk for MSSQLOCS but will instead use the existing iSCSI disks and create a folder in each: F:\MSSQL.3 and G:\MSSQL.3. I also won’t move the system databases since I already went through that exercise with the default instance.

I made a separate post about what I learned working with SQL Server 2005 instances.

>> Since I now have a fully functional Exchange setup and a decent screenshot tool (MS Snipping Tool) I decided to make a separate post with some screenshots of miscellaneous AD settings specific to Exchange Server 2003.

2011-01-30

I've been busier at work and reading up on OCS, so I haven't had time to mess around with my VMs as much. I've noticed that the hosts on the 10.10.0.0/16 subnet cannot access the Internet. I think that's because I disabled "NAT and firewalling" on Freesco. What I really need to do is disable just the firewall and keep NAT enabled, or enable everything but loosen the firewall rules. Right now this isn't a big issue, so it's not a high priority for me to fix.

Yesterday I finally got my OCS environment set up and have IM and on-premises Web conferencing working. I have Office Communications Server 2007 (non-R2) Enterprise Edition in a consolidated configuration with one pool and one front end server hosting all the internal roles. Once again, I know this version is not the latest and is two versions behind what is out now, but the client that I work at uses it, so I needed to learn more about it. I'm going to make a separate post with some notes about OCS deployment.

2012-06-20

After months of not doing anything with my ESXi host, the other day I upgraded it to ESXi 5.0 and made a post about that. I'm planning to take the VMware vSphere: Install, Configure, Manage [V5.0] course and the VCP exam, so that's why I'm getting back into this. My work is planning a big project to virtualize all remaining physical servers and will pay for the course and exam, so it's a good opportunity for me. Plus, it'll look good on my resume.

June 2nd, 2012 at 7:11 PM

Nice tip on the FreeSco config turning off the firewall. I beat my head on the wall for several hours before figuring it out. I had a premade floppy image that had it enabled, so the trick was creating the vm and making sure NOT to connect any of the NICs at boot. Make the changes, save, reboot.., halt, enable the nics, start up VM (Router).. and the world was right! Everything worked as expected (prayed for). :).

June 3rd, 2012 at 11:58 AM

Hi Rich: Thanks for checking out my site and commenting. It's always nice to see that someone was able to make use of something I posted.

August 27th, 2013 at 10:13 AM

Hi, and thanks +1 on the FreeSco firewall disabling tip from me too, I could tell I was in for losing a serious amount of time hadn't I stumbled upon your website, thanks!

andrea

August 30th, 2013 at 9:11 AM

Hi Andrea: Thanks for visiting my site. I'm glad you found the info helpful.